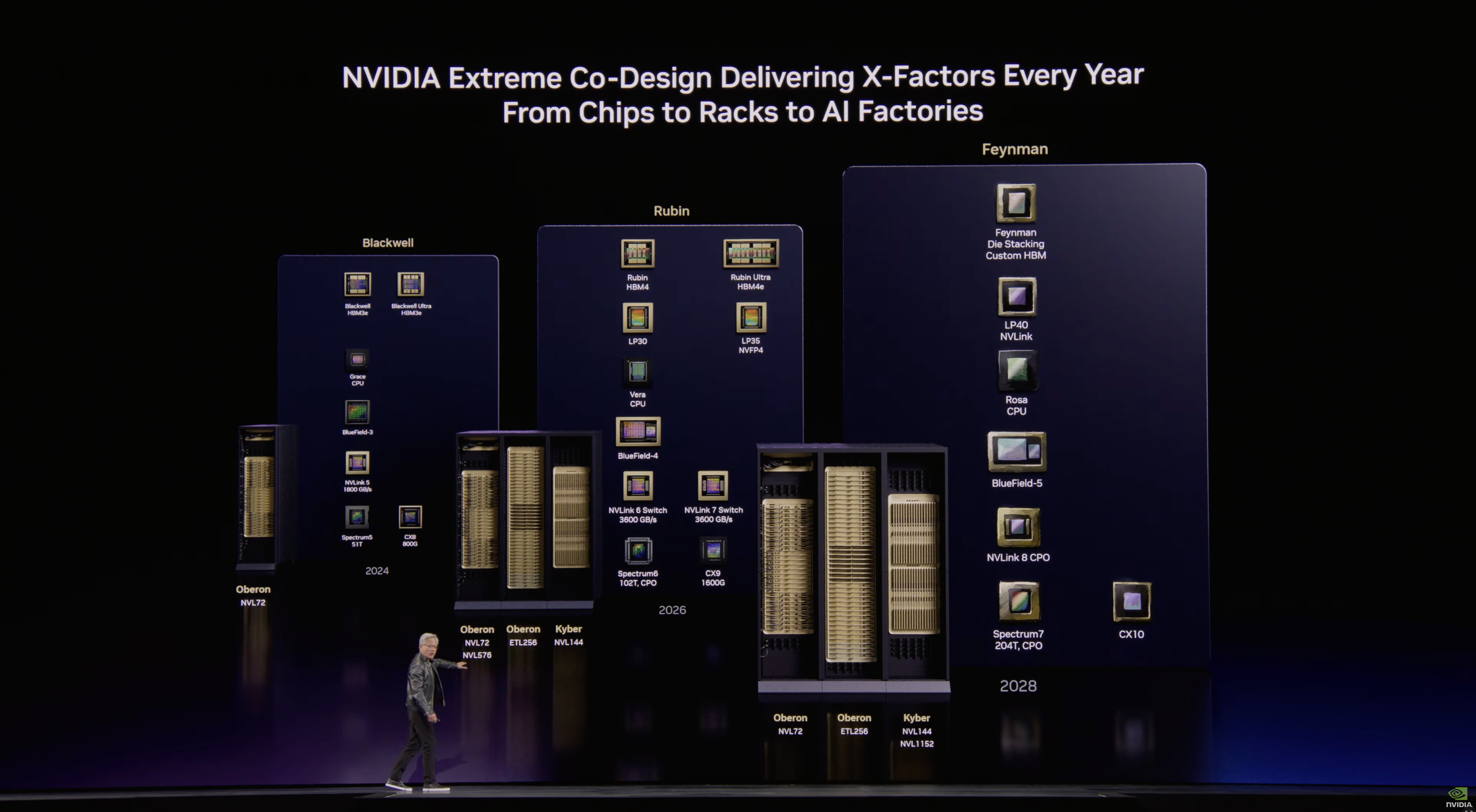

Nvidia presented its updated data center product roadmap at its GPU Technology Conference this week, revealing several surprises but mostly confirming that the company is on track to introduce a brand-new GPU architecture every couple of years and to update its AI GPU family every year. As it turns out, Nvidia intends to use die stacking and custom HBM memory with its Feynman GPUs, which will be accompanied by its Rosa CPUs, never previously mentioned in the roadmap.

2026: Rubin, Vera, LP30, BlueField-4

2027: Rubin Ultra, LP35, NVLink 7

Next year, the company plans to update its offerings with the Rubin Ultra AI accelerators, which will feature four compute chiplets and be equipped with 1 TB of HBM4E memory, dramatically increasing performance compared to this year's Rubin. In addition, these GPU accelerators will be paired with the Groq LP35 LPU, which will support the NVFP4 data format and therefore improve performance.

Yet another tangible performance improvement for Nvidia's AI platforms is the introduction of the company's Kyber NVL144 rack-scale solution, which will pack 144 Rubin Ultra GPU packages (enabled by an NVLink 7 switch) and therefore offer at least a 4X performance improvement compared to Oberon NVL72 racks with 72 Blackwell GPU packages.

2028: Feynman, Rosa, LP40, NVLink goes optics

Nvidia's data center portfolio will improve in 2027 by increasing the number of GPUs per rack (i.e., quantitative improvements) and introducing a new LPU with NVFP4 support. The company's 2028 data center products will be based on all-new architectures that will bring qualitative improvements to the company's products.

"The next generation from here is Feynman," said Jensen Huang, chief executive of Nvidia, at GTC. "Feynman has a new GPU, of course; it also has a new LPU LP40 […] now uniting the scale of Nvidia and the Groq building together LP40, it is going to be incredible. A brand-new CPU called Rosa, short for Rosalyn, BlueField-5, which connects the next CPU with the next SuperNIC CX10. We will have Kyber, which is copper scale up, and we will have Kyber CPO scale-up. So, for the first time we will scale up with both copper and co-packaged optics."

First, Nvidia's Feynman data center GPU will adopt die stacking, which will give the company a new way to scale performance. Secondly, Feynman GPUs will also use custom high-bandwidth memory (most likely a variant of C-HBM4E), which should enable Nvidia to boost HBM capacity beyond 1 TB per GPU package and increase memory bandwidth.

Thirdly, the Feynman platforms will be powered by Rosa CPUs, Nvidia's next-generation processors developed in-house with a focus on ultimate single-thread performance. The emergence of Rosa shows that the company has shortened its CPU development cycle from four years to two (probably by introducing a new design team), putting it on par with leading CPU developers AMD and Intel, which tend to release new microarchitectures every couple of years.

Fourthly, this platform will also integrate the LP40 LPU, which will not only support Nvidia's NVFP4 format but also connect to other system components using the NVLink protocol, thereby integrating Groq hardware with Nvidia's GPUs.

Fifthly, the Feynman platform will also be the first to adopt NVLink switches with co-packaged optics, which will enable optical interconnections using the NVLink protocol (they are not impossible today, but CPO makes them significantly easier and cheaper to implement). Optical interconnects will enable Nvidia to increase the scale-up world size of its rack-scale solutions to 576 GPU packages (using Oberon chassis) or even 1152 GPU packages (using Kyber chassis). That will make the company's rack-scale systems even more competitive than they are today against alternative solutions like AMD's Instinct or custom accelerators deployed by hyperscalers.

Last but not least, Nvidia plans to introduce the BlueField-5 DPU, seventh-generation Spectrum-X Ethernet with co-packaged optics, and the ConnectX-10 SuperNIC in 2028.
