Along with new Xeons and vPro platforms, Intel is launching a larger configuration of its Battlemage GPU for the first time, but it's not targeted at gaming. Instead, the new Arc Pro B70 and B65 cards bring options for more compute horsepower and larger memory capacities to users of pro apps and local AI inference workloads on Intel's hardware-software stack.

The first big Battlemage product, the Arc Pro B70, features 32 Xe Cores running at a rated 2800 MHz for a theoretical 22.9 TFLOPS of FP32 compute performance. Intel hooks it up to 32GB of 19 Gbps GDDR6 memory on a 256-bit bus to enable 608 GB/s of bandwidth.
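Those headline figures can be sanity-checked with some quick arithmetic. The Python sketch below is only a back-of-the-envelope check: the function names are ours, and the figure of 128 FP32 lanes per Xe Core (each retiring one fused multiply-add, counted as two FLOPs, per clock) is an assumption that is not stated in Intel's announcement, though it does reproduce the quoted compute number.

```python
def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: per-pin data rate times bus width, over 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8


def fp32_tflops(
    xe_cores: int,
    clock_mhz: float,
    lanes_per_core: int = 128,          # ASSUMPTION: not given in the article
    flops_per_lane_per_clock: int = 2,  # ASSUMPTION: one FMA = 2 FLOPs
) -> float:
    """Theoretical FP32 throughput in TFLOPS."""
    flops = xe_cores * lanes_per_core * flops_per_lane_per_clock * clock_mhz * 1e6
    return flops / 1e12


if __name__ == "__main__":
    # Arc Pro B70 figures as quoted in the article
    print(f"Bandwidth: {memory_bandwidth_gbs(19, 256):.0f} GB/s")
    print(f"FP32:      {fp32_tflops(32, 2800):.1f} TFLOPS")
```

With the B70's quoted 19 Gbps memory, 256-bit bus, 32 Xe Cores, and 2800 MHz clock, this works out to 608 GB/s and roughly 22.9 TFLOPS, matching Intel's numbers.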

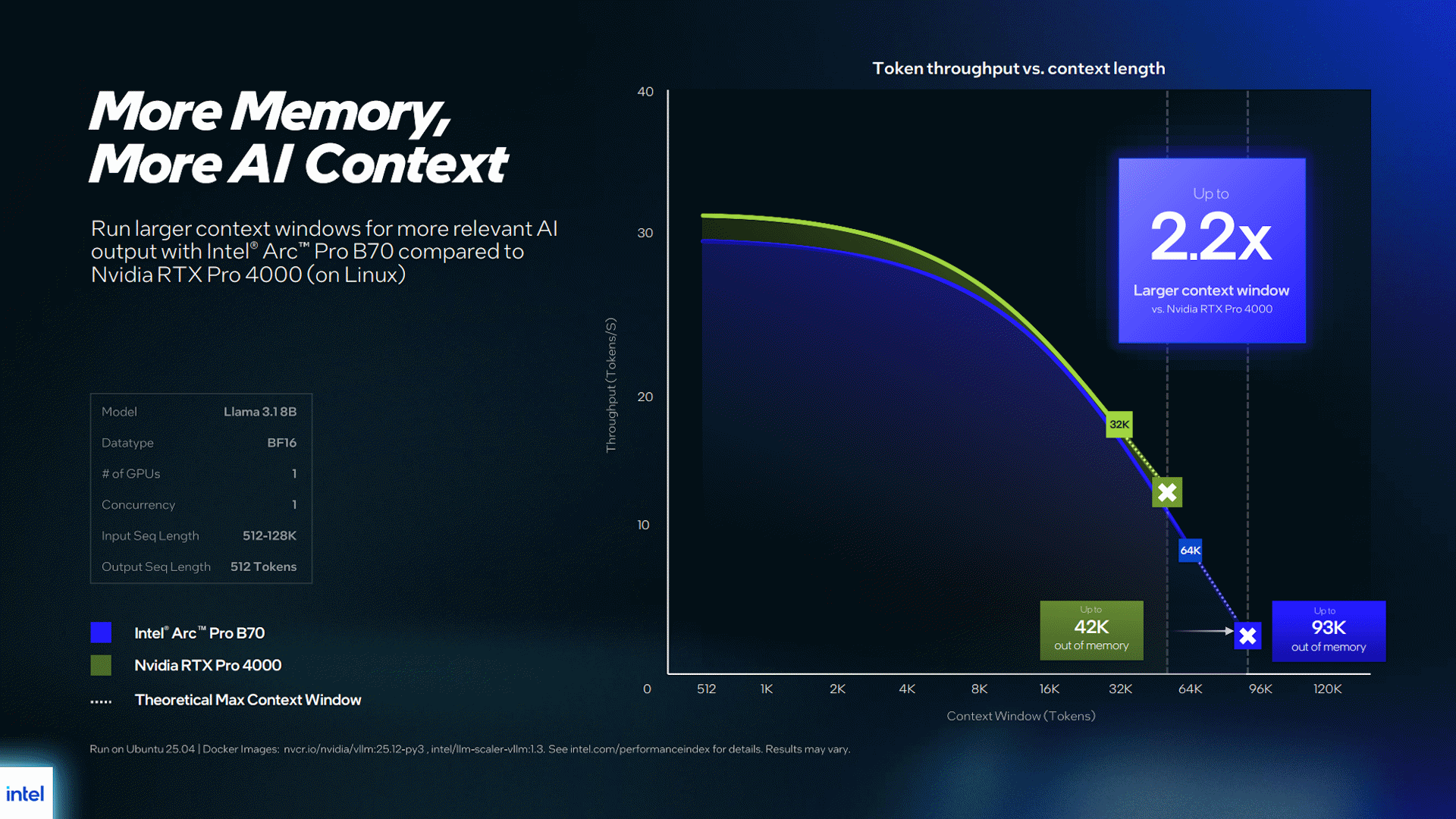

Both the relatively large VRAM capacity and higher memory bandwidth of this card are important for LLM inference workloads, where being able to fit both models and context in GPU-local memory is critical to achieving the best performance.
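As a rough illustration of why capacity matters, the sketch below estimates the memory footprint of a model's weights plus its key-value cache, using the common approximation for transformer KV-cache size. The model shape here (8 billion parameters, 32 layers, 8 KV heads, a head dimension of 128, 16-bit values) is hypothetical and chosen purely for illustration. The estimate also ignores activations and runtime overhead and treats a card's advertised capacity as GiB, so real-world headroom will be smaller.

```python
GIB = 2**30


def weight_bytes(num_params: int, bytes_per_param: int) -> int:
    """Memory needed for the model weights alone."""
    return num_params * bytes_per_param


def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int,
                   concurrent_sequences: int = 1) -> int:
    """Approximate KV-cache size; the factor of 2 covers keys and values."""
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_value
    return per_token * context_tokens * concurrent_sequences


def fits_in_vram(total_bytes: int, vram_gib: int) -> bool:
    """True if the estimate fits, ignoring activations and runtime overhead."""
    return total_bytes <= vram_gib * GIB


if __name__ == "__main__":
    # HYPOTHETICAL model shape, for illustration only
    weights = weight_bytes(8_000_000_000, 2)
    for sequences in (1, 4, 8):
        kv = kv_cache_bytes(32, 8, 128, 32_768, 2, sequences)
        total = weights + kv
        print(f"{sequences} x 32K context: {total / GIB:.1f} GiB, "
              f"fits in 24: {fits_in_vram(total, 24)}, "
              f"fits in 32: {fits_in_vram(total, 32)}")
```

Under these assumptions, four concurrent 32K-token contexts fit within 32 units of memory but not 24, which is the kind of gap that extra capacity is meant to close.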


The Arc Pro B70 can be configured for a power envelope anywhere from 160W to 290W, which lets it fit a range of cooling designs and system form factors. Intel says this card will start at $949 for its own reference design, and partner cards will be available from brands including ARKN, ASRock, Gunnir, Maxsun, and Sparkle.

The Arc Pro B65 keeps the 32GB of memory and 608 GB/s memory bandwidth of its stablemate, but drops down to just 20 Xe Cores of compute capacity—identical at a high level to the existing Arc Pro B60.

That large gulf in raw compute compared to the B70 is likely meant to appeal to users of professional and creative applications that can benefit from more memory than lesser Arc Pro cards, but not the extra horsepower. It could also appeal to local LLM enthusiasts chasing memory capacity and bandwidth on the cheap.

Intel isn't announcing a price for the B65 today, but it says the card will be available in mid-April.

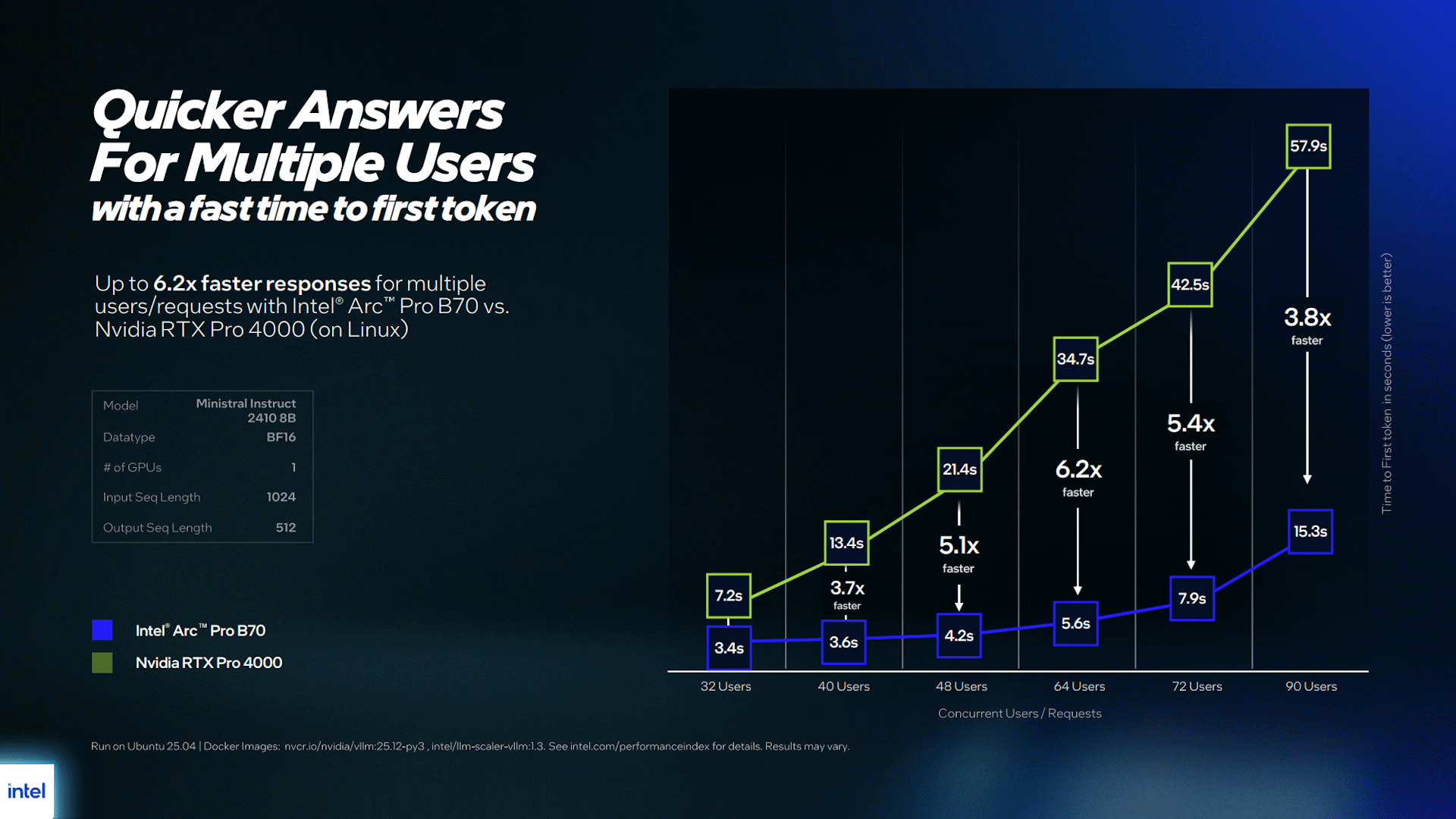

Intel positions the B70 against Nvidia's $1,800 RTX Pro 4000 24GB, the second-cheapest Blackwell workstation card so far, and highlights the B70's advantages against that product for larger context windows and time-to-first-token latency for large numbers of concurrent users.

Intel mostly charts its wins against the RTX Pro 4000 using models with BF16 quantizations, whose higher potential accuracy might be desirable in some use cases but also obscures the Blackwell card's potential performance advantages with increasingly popular lower-precision data types like Nvidia's own NVFP4. The XMX matrix acceleration of Battlemage only extends down to FP16 and INT8 data types, while Blackwell supports a much wider range of reduced-precision formats.

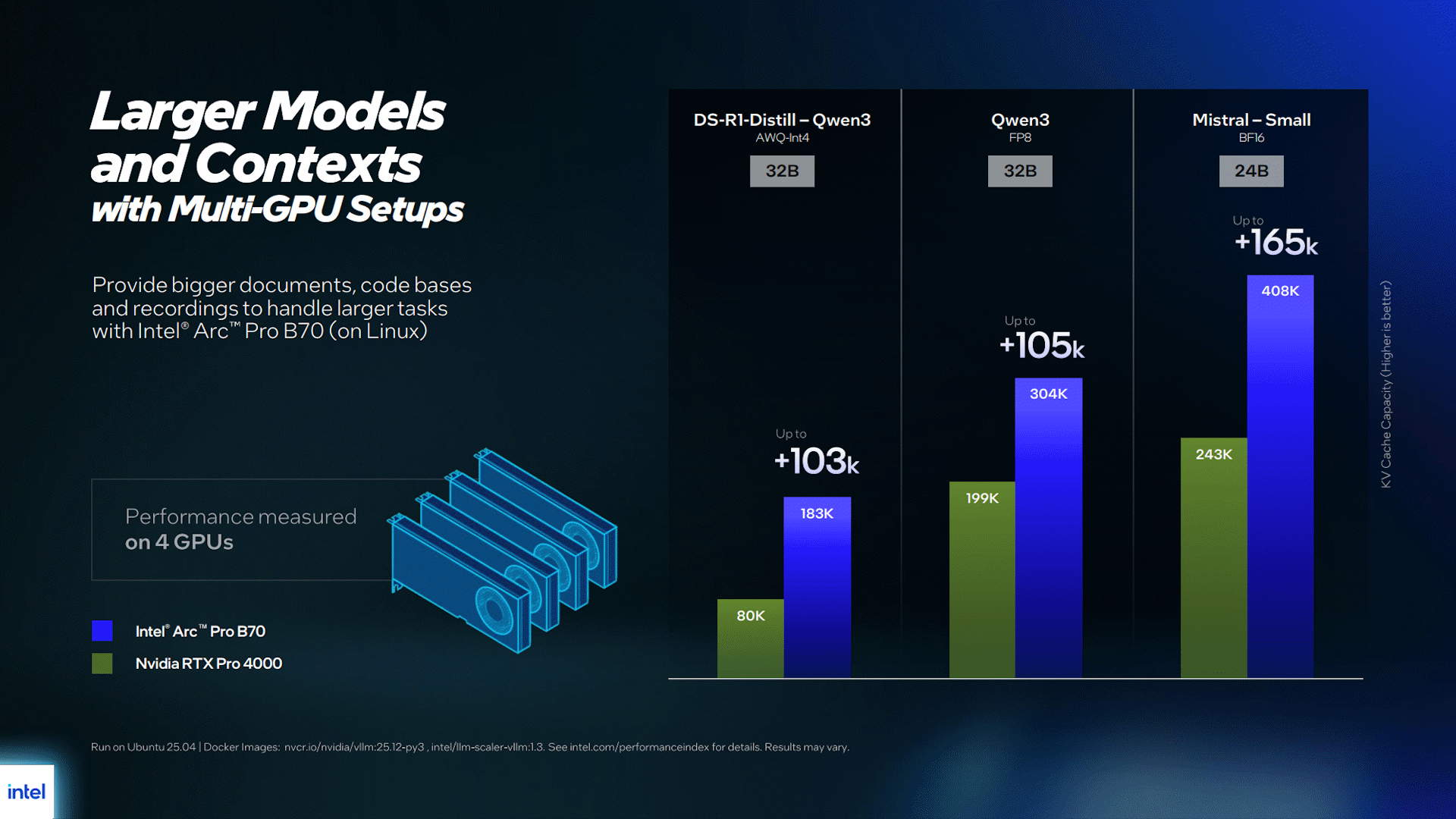

Intel also highlights the multi-GPU support of its software stack, which lets interested parties scale up LLM serving across multiple Arc Pro cards to increase memory capacity for larger context windows, larger models, or both.

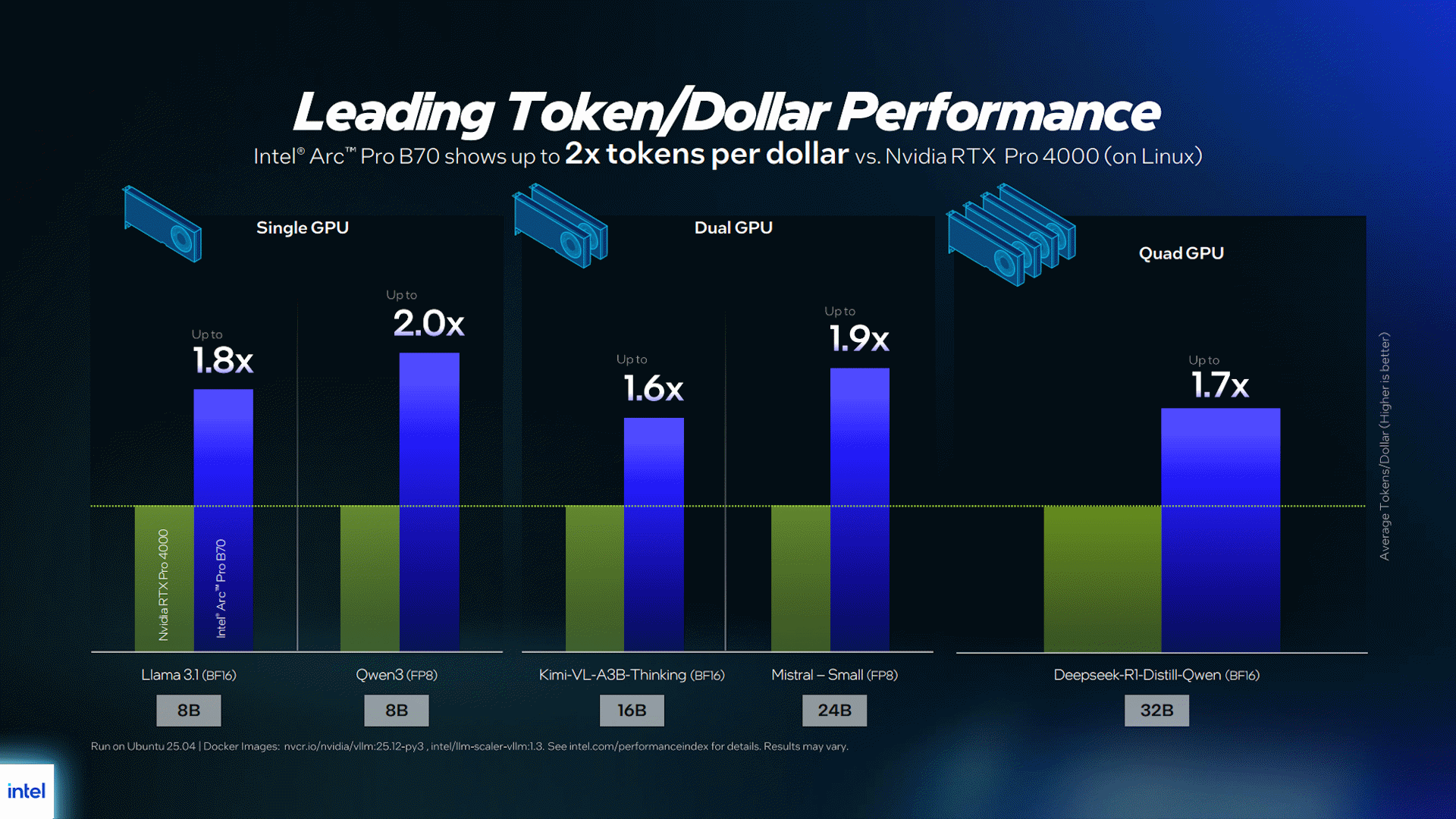

Intel further emphasizes the advantages of its platform for cost-per-token across a range of models, and indeed, at $949 apiece, each Arc Pro B70 rings in at a little more than half the price of the $1,800 RTX Pro 4000. Any tokens you get out of a B70 will naturally be cheaper by that math. The Arc Pro B70 also undercuts AMD's $1,299 Radeon AI Pro R9700, which until now has been another relatively cheap way to get to 32GB on a local AI card.
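For a sense of how those list prices compare on memory alone, the sketch below divides each card's price by its VRAM capacity. The prices and capacities are the ones quoted in this article; the dictionary layout and function name are ours. Dollars per gigabyte says nothing about throughput, so treat this as a rough yardstick rather than a substitute for a real cost-per-token measurement.

```python
# (price in USD, VRAM in GB), as quoted in the article
CARDS = {
    "Intel Arc Pro B70": (949, 32),
    "AMD Radeon AI Pro R9700": (1299, 32),
    "Nvidia RTX Pro 4000": (1800, 24),
}


def price_per_gb(price_usd: float, vram_gb: int) -> float:
    """List price divided by VRAM capacity, in USD per GB."""
    return price_usd / vram_gb


if __name__ == "__main__":
    # Print cards from cheapest to most expensive per GB of VRAM
    for name, (price, vram) in sorted(
        CARDS.items(), key=lambda item: price_per_gb(*item[1])
    ):
        print(f"{name:<26} ${price_per_gb(price, vram):6.2f} per GB")
```

By this measure the B70 lands at just under $30 per GB, the R9700 at just under $41, and the RTX Pro 4000 at $75.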

But it's worth noting that Nvidia has several other RTX Pro cards above the RTX Pro 4000 in its lineup that allow AI systems architects to precisely tailor memory and compute requirements to given workloads, so it might not always be necessary for those folks to spread workloads across multiple cards this way. And that same breadth of offerings means that those working with Nvidia server GPUs can scale up the capabilities of those systems further than Intel's product stack currently allows.

Nvidia and its partners have also long been in the business of selling eight-GPU servers, while Intel didn't highlight configurations extending beyond four GPUs in its presentation.

It's also worth remembering that hardware alone does not an AI system make, as our own local AI experiments have demonstrated. Nvidia's CUDA moat remains wide, and buyers considering an Arc Pro-powered solution would need to account for the potential time and costs involved in handling issues of application support and stability on Intel's platform.

For intrepid AI explorers undeterred by potentially choppy software seas, Intel's relatively low cost of entry might appeal, particularly to organizations just trying to spin up an experimental on-premises AI server. But more seasoned AI developers working with an eye toward scaling up their applications both locally and in the cloud will likely want to stick with systems built around Nvidia's offerings for reasons of compatibility, scalability, and TCO.

It remains to be seen whether Intel will ever deploy big Battlemage for gaming, but given the current silicon and memory supply crunch and the midrange performance ballpark where this GPU might land for gaming, it seems unlikely that Intel could sell a gaming-first version of this card profitably.

The AI and professional markets allow for higher prices and better margins, and given CEO Lip-Bu Tan's stated goals for the company, we expect that's where bigger Battlemage will stay. But anything could happen in today's crazy tech landscape.

Follow Tom's Hardware on Google News, or add us as a preferred source, to get our latest news, analysis, & reviews in your feeds.
