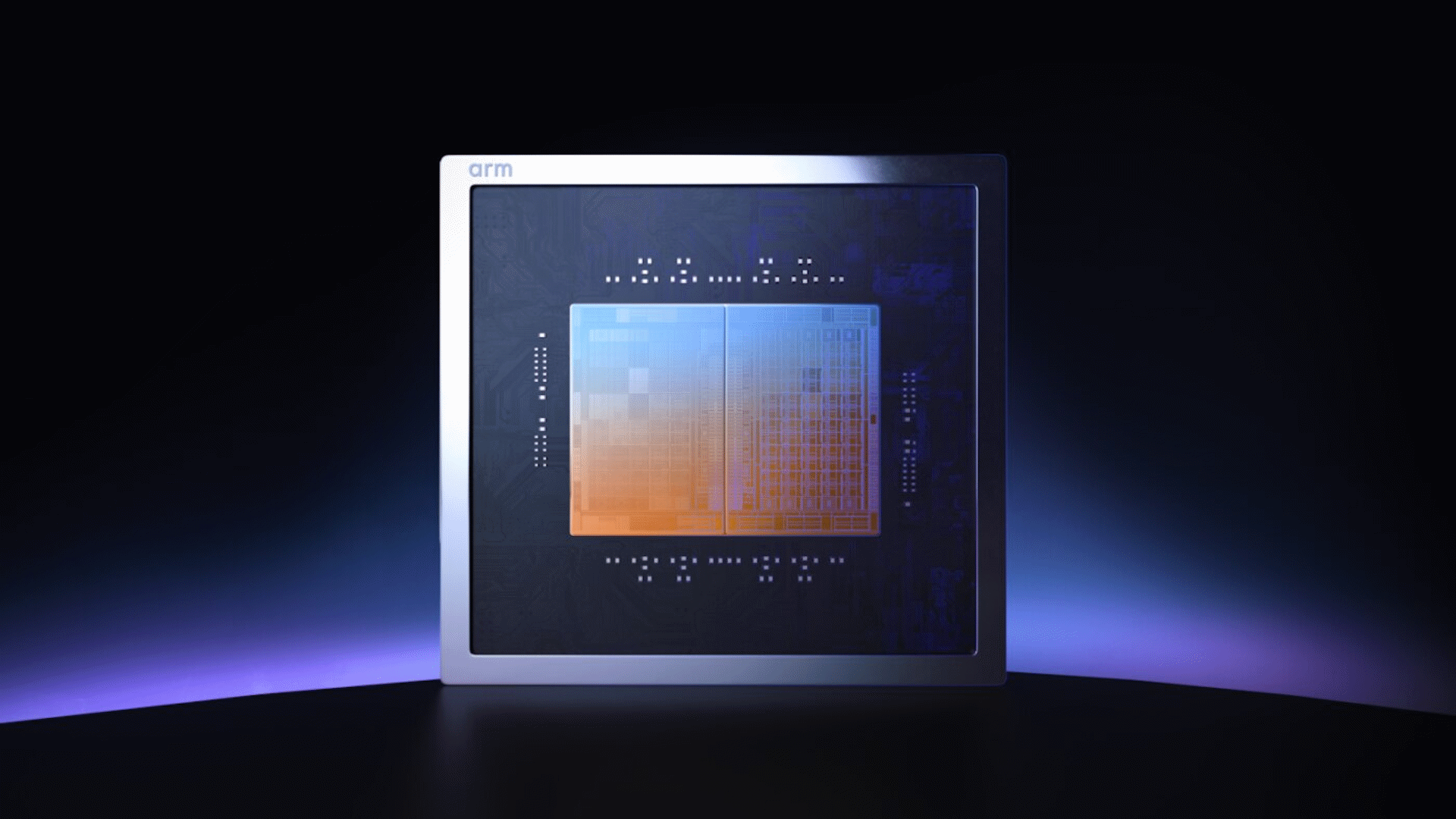

Arm today announced the AGI CPU, a data center processor family with up to 136 cores that the company designed and will sell as finished silicon. The chip, built on TSMC's 3nm process with Neoverse V3 cores, was co-developed with Meta and marks the first time in Arm's 35-year history that the company has shipped its own production processor rather than licensing IP to partners.

The AGI CPU has been designed for what Arm calls "agentic AI infrastructure," the CPU-side orchestration work required to coordinate accelerators and manage data movement in large-scale AI deployments.

136 Neoverse V3 cores at 300 watts

The chip packs up to 136 Neoverse V3 cores across two dies, running at up to 3.2 GHz all-core and 3.7 GHz boost, all within a 300-watt TDP. It supports 12 channels of DDR5 memory at up to 8800 MT/s, delivering more than 800 GB/s of aggregate memory bandwidth, or about 6 GB/s per core, with a target of sub-100ns latency. I/O includes 96 PCIe Gen6 lanes and native CXL 3.0 support for memory expansion and pooling.
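The bandwidth figures can be sanity-checked with simple arithmetic. The sketch below assumes each DDR5 channel moves 8 bytes (64 data bits) per transfer, which is the usual channel width but is not stated in Arm's announcement; the function name is purely illustrative.

```python
# Back-of-the-envelope check of the quoted memory bandwidth figures.
# Assumption (not from the announcement): each DDR5 channel moves
# 8 bytes (64 data bits) per transfer.

def peak_bandwidth_gb_s(channels: int, mega_transfers_per_s: int,
                        bytes_per_transfer: int = 8) -> float:
    """Theoretical peak bandwidth in GB/s (decimal gigabytes)."""
    megabytes_per_s = channels * mega_transfers_per_s * bytes_per_transfer
    return megabytes_per_s / 1000

total = peak_bandwidth_gb_s(channels=12, mega_transfers_per_s=8800)
per_core = total / 136  # top 136-core configuration

print(f"aggregate: {total:.1f} GB/s")
print(f"per core:  {per_core:.2f} GB/s")
```

Under that assumption the theoretical peak works out to roughly 845 GB/s, or a little over 6 GB/s per core, in line with the "more than 800 GB/s" and "6 GB/s per core" figures quoted above. Sustained real-world bandwidth would be lower.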


Arm's reference platform is a 1OU dual-node server compliant with the Open Compute Project's DC-MHS standard. Two AGI CPUs fit per blade, and a standard air-cooled 36kW rack holds 30 blades for 8,160 cores total. Arm has also partnered with Supermicro on a liquid-cooled 200kW configuration that houses 336 chips and more than 45,000 cores.
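The rack-level core counts follow directly from the per-chip numbers. A minimal sketch, assuming every socket is populated with the top 136-core part at the full 300-watt TDP (the constant and function names are illustrative, not Arm's):

```python
# Rack-level totals derived from the per-chip figures in the article.
# Assumption: every socket holds the 136-core part at its 300 W TDP.
CORES_PER_CHIP = 136
TDP_WATTS = 300

def rack_totals(chips: int) -> tuple[int, float]:
    """Return (total cores, combined CPU TDP in kW) for one rack."""
    return chips * CORES_PER_CHIP, chips * TDP_WATTS / 1000

air_chips = 30 * 2  # 30 blades, two CPUs per blade
air_cores, air_kw = rack_totals(air_chips)
liquid_cores, liquid_kw = rack_totals(336)

print(f"air-cooled:    {air_chips} chips, {air_cores} cores, {air_kw} kW CPU TDP")
print(f"liquid-cooled: 336 chips, {liquid_cores} cores, {liquid_kw} kW CPU TDP")
```

This reproduces the 8,160-core figure for the air-cooled rack and gives 45,696 cores for the liquid-cooled one. On these assumptions, CPU TDP alone accounts for about half of each rack's power budget (18 kW of 36 kW, and 100.8 kW of 200 kW), with the rest left for memory, networking, storage, and cooling overhead.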

Arm claims the AGI CPU delivers more than two times the performance per rack compared to the latest x86 platforms. That figure is, of course, based on the company’s own internal estimates at this stage, not independent benchmarks.

GPUs have made most of the headlines surrounding AI hardware to date, but demand for more powerful general-purpose compute is growing as agentic systems like OpenClaw explode in popularity. Arm is clearly hoping it can meet and cash in on this demand, and hopefully that won't be to the detriment of non-AI customers, who seem to have long since been forgotten by the likes of Nvidia and Micron.

OpenAI among early customers

Meta served as the lead partner on the project and plans to deploy the AGI CPU alongside its custom MTIA accelerators. Santosh Janardhan, head of infrastructure at Meta, said the two companies worked together on the chip and are committed to a multi-generation roadmap.

Beyond Meta, Arm confirmed commercial commitments from Cerebras, Cloudflare, F5, OpenAI, Positron, Rebellions, SAP, and SK Telecom. Sachin Katti, head of industrial compute at OpenAI, said the AGI CPU will play a role in OpenAI's infrastructure by strengthening the orchestration layer that coordinates large-scale AI workloads.

Arm has historically operated as an IP licensing company. Its partners, from Apple to Nvidia to AWS, design their own chips using Arm's instruction set architecture and core designs. The AGI CPU adds a third option alongside IP licensing and Arm's Compute Subsystems (CSS) program: Arm-designed, production-ready silicon that customers can deploy directly.

Arm said the AGI CPU product line will continue in parallel with the Arm Neoverse CSS product roadmap, and that follow-on products are already committed. The company seems keen to point out that this is an additive move rather than a pivot that competes with existing licensees, though how Arm manages that as it sells chips into the same data centers as Nvidia Grace, AWS Graviton, Google Axion, and Microsoft Cobalt remains to be seen.
