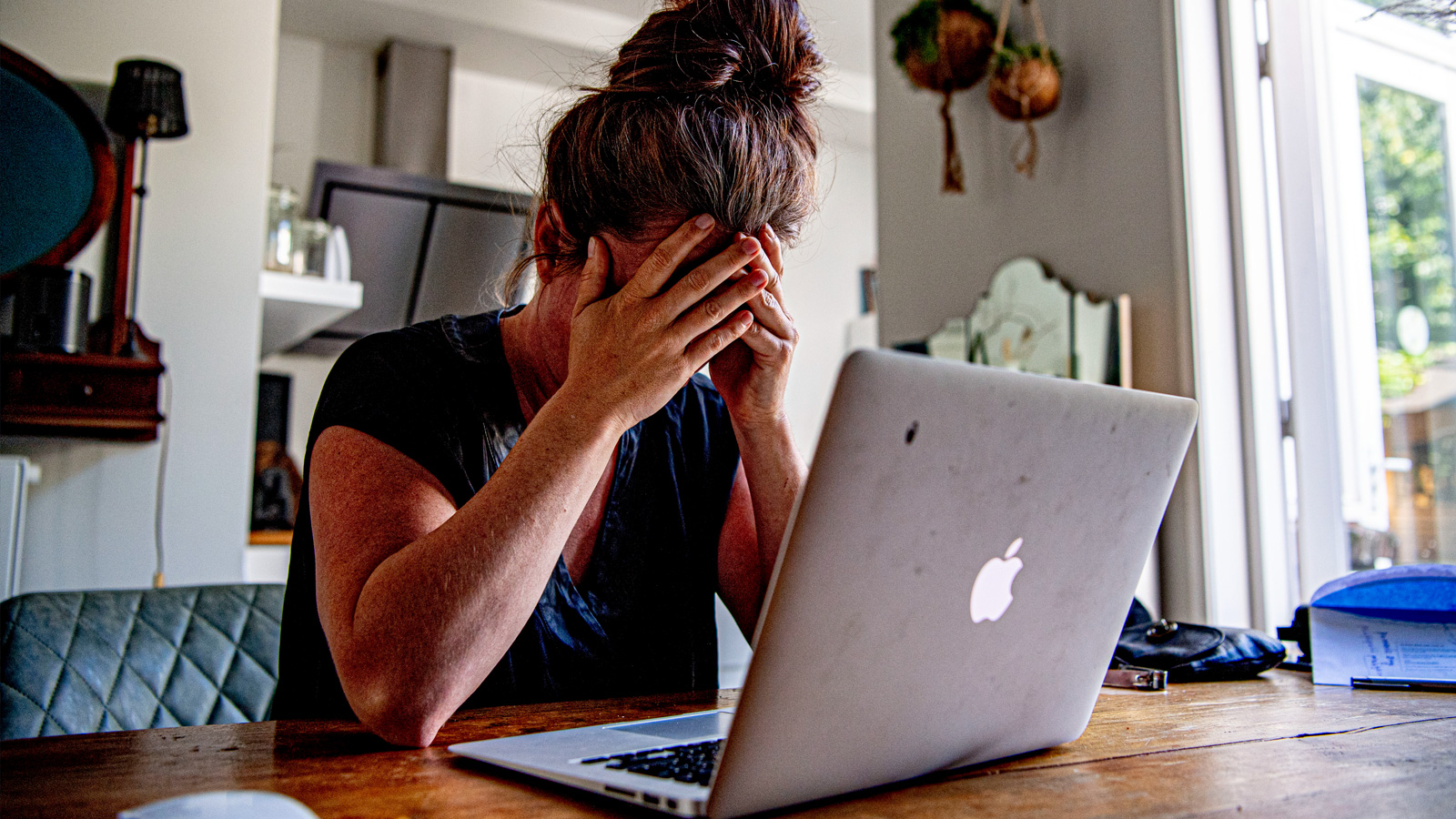

The burning question at the heart of the AI revolution in the workplace is ultimately: Is it worth it? Does productivity improve? Do costs come down? Is it remotely as progressive and transformative as the AI developers claim? A new study published in the Harvard Business Review suggests that although AI has the potential to marginally improve productivity, it also leads to workers taking on more pressure, resulting in more unforced errors and more frequent employee burnout.

Both the gains and the concerns originated not with employers, but with the employees themselves. This suggests that even in companies where AI use isn't mandated or even explicitly encouraged, employers may need to adopt AI codes of practice that protect their employees from their own tendencies.

The gains are real

Other studies have suggested that few companies have benefited from AI, or that using AI can actually increase the time spent on a task even when it feels faster. This study is quite unambiguous: AI helped employees do more, with the caveat that the increased productivity often came with an "intensification" of the workplace.

With an AI on hand to answer simple questions and coach them through unfamiliar work, many employees felt they could take on new tasks.

"Product managers and designers began writing code; researchers took on engineering tasks; and individuals across the organization attempted work they would have outsourced, deferred, or avoided entirely in the past," researchers said.

Employees felt tasks outside their usual wheelhouse were accessible and attainable because AI held their hand while they learned how to do them. This increased the scope of many employees' roles. But it also meant they were taking on tasks they really weren't qualified to do, working at times and in places where they might otherwise be resting, and multitasking in ways that left them juggling more varied tasks.

Doing someone else's job

The big fear for many workers is that AI will replace their role. While there is some real concern, it's likely overstated. Perhaps workers should instead be concerned that their roles will be taken not by AI, but by colleagues who are using AI to expand their capabilities.

The issue, however, is competence. Although the study suggests that workers did indeed step outside their lane to produce more, especially where they would typically have relied on a colleague or subordinate for a task, it often meant producing lackluster work. This was particularly prevalent where workers without programming experience were vibe-coding solutions, only to informally request engineers' help to finish partially completed pull requests.

Although workers often described their AI use as "just trying things," the over-confident expansion of their own responsibilities meant that they often required additional help with a job that would have been better handed to a professional, or that called for increased headcount to handle the additional responsibility.

There's always time for a prompt

Prompts are quick and easy for many to assemble, but the responses often take time to read through or action. That meant workers who used AI a lot would often find ways to slip in an extra prompt just before they went off to lunch, or while working on other tasks. This additional multitasking made workers feel busier and more productive. They were able to handle more tasks at once because the AI was often doing something in the background.

But that added to workers' mental load. Workers were forced to jump between multiple tasks, playing havoc with their attention span. It demanded frequent checking of AI outputs, and that sometimes led to tasks lying incomplete for longer as they joined the carousel of ongoing tasks that were not as straightforward as they might have been, had they been handled by a professional whose sole role is that kind of job.

Downtime was impacted too. Workers would throw out prompts while they ate their lunch or while waiting for the coffee maker to brew. The conversational style of prompting blurred the lines between what was work and what was socializing, meaning that over time, workers often filled what should be rest time with more work.

This plays into the 24/7 access culture many workers already face. Employees in this study often struggled to relax, and felt less rejuvenated by time away from work, because the pressure of one more easy prompt was always there.

Although the study doesn't take this speculative leap, I wonder if AI use and its instant gratification of conversational-style responses mean that workers are using it a little like social media. They chase the dopamine hit of a response and the added satisfaction of completing a task, even if that means taking on more cognitive load than they can comfortably handle.

Someone should do a study on whether AI response times have been designed to be addictive, as well as to give the tool enough time to produce an effective response.

Ending the vicious cycle

The study's hypothesis that AI work begets more work proved accurate. Workers were faster and more productive with certain tasks, meaning they often took on more to fill that time, often relying on AI to do so. That reliance introduced additional work for them and their colleagues, and spread those tasks across a broader timeframe, meaning some work took longer, introduced more errors, and required additional time, effort, and mental energy.

The effect was that workers who felt like they were getting a lot more done were more often getting only a little more done, and ultimately found themselves burning out from the whole process.

And that's within a company that didn't force AI on its employees. While employers might see workers pulling themselves up by their AI bootstraps as at least partially positive, the long-term negative effects could be dire.

Errors may mount, experienced workers may leave, and businesses may find it increasingly hard to spot what's actually beneficial and what's just AI-induced busy work.

To combat this, researchers suggest employers should adopt AI codes of practice, whether they encourage AI use or not. These should include dedicated pauses that force workers to reflect on how a task is being managed and whether it could be handled differently. That should go hand in hand with focusing on a single task, or a limited number of tasks, at one time.

To help maintain attention span and avoid constant work creep, prompts could be sent out in batches, and their responses actioned within a specific timing window. Rather than constantly receiving notifications at the rate of conversation, workers could be sent a report on AI activity and responses once an hour.
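For readers who want to picture how that batching might work, here is a minimal Python sketch. It is not from the study: the `PromptBatcher` class, its field names, and the one-hour default window are all assumptions made for illustration. Prompts are queued as they occur to the worker and only released as a single batch once the window has elapsed.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List


@dataclass
class PromptBatcher:
    """Hypothetical helper: holds prompts and releases them once per window."""

    window_start: datetime                   # when the current window began
    window: timedelta = timedelta(hours=1)   # assumed default: one hour
    pending: List[str] = field(default_factory=list)

    def submit(self, prompt: str) -> None:
        # Queue the prompt instead of sending it straight away.
        self.pending.append(prompt)

    def flush_if_due(self, now: datetime) -> List[str]:
        # Before the window has elapsed, release nothing and keep queuing.
        if now - self.window_start < self.window:
            return []
        # Otherwise hand back everything queued so far and start a new window.
        batch = self.pending
        self.pending = []
        self.window_start = now
        return batch
```

In use, a worker's tool would call `submit()` whenever a prompt comes to mind and `flush_if_due()` on a timer, so prompts and their responses arrive together at a predictable moment rather than interrupting other work.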

The authors also emphasized the importance of human connection. Lunch breaks, water-cooler chats, employer check-ins, and wellbeing meetings are spaces that should be protected from AI creeping in.

"By institutionalizing time and space for listening and dialogue, organizations re-anchor work in social context and help counter the depleting, individualizing effects of fast, AI-mediated work," the study concluded.
