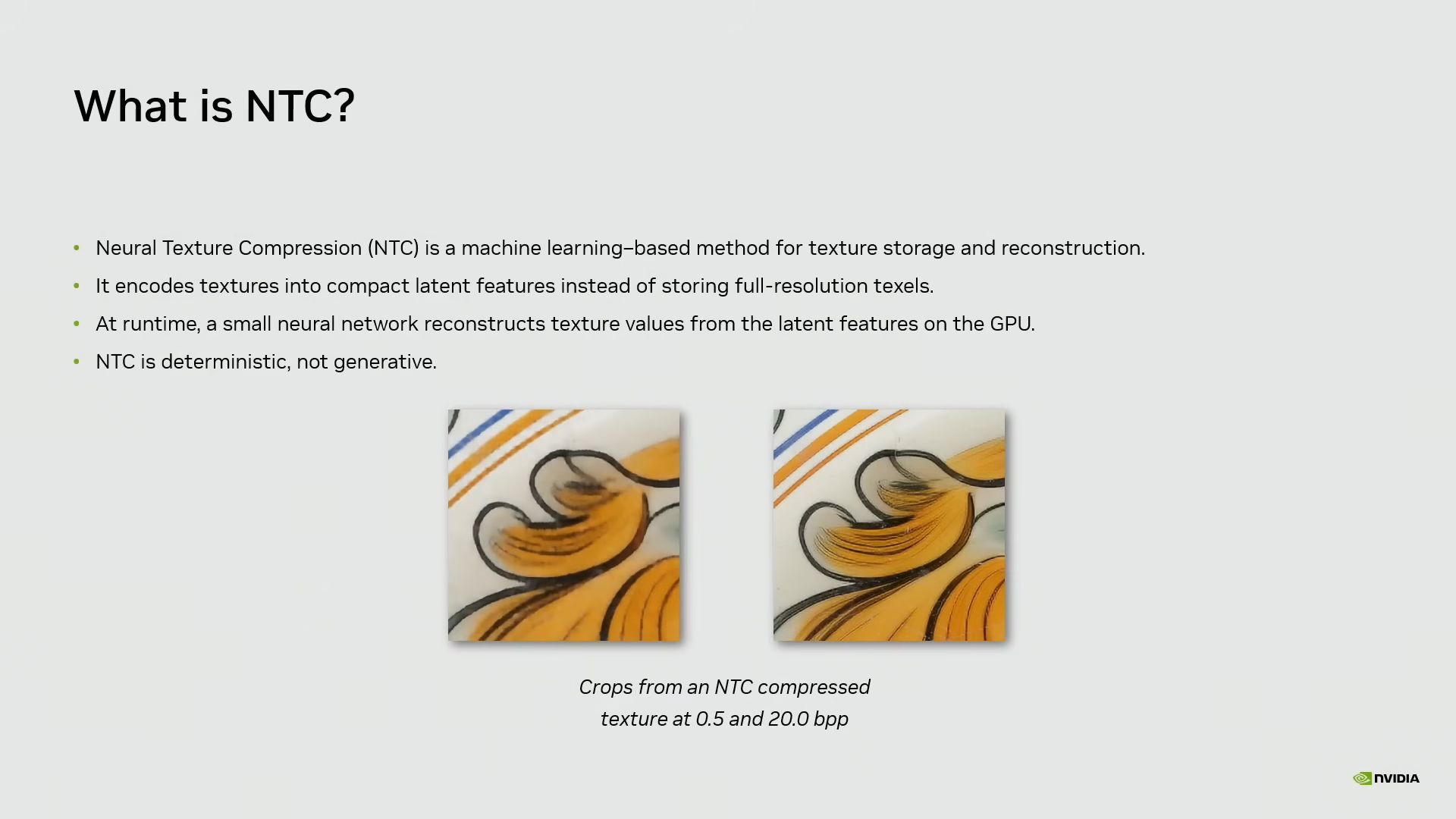

As games become more complex and photorealistic, the industry has increasingly relied on upscaling technology, rather than tighter optimization, to meet surging hardware demands. One of the biggest issues arising from that subpar optimization is VRAM usage, which has risen sharply over the past few years. To combat this, Nvidia has developed a technology called "Neural Texture Compression" (NTC), which came up again in today's GTC talk. The best graphics cards will be able to leverage Nvidia's NTC technology.

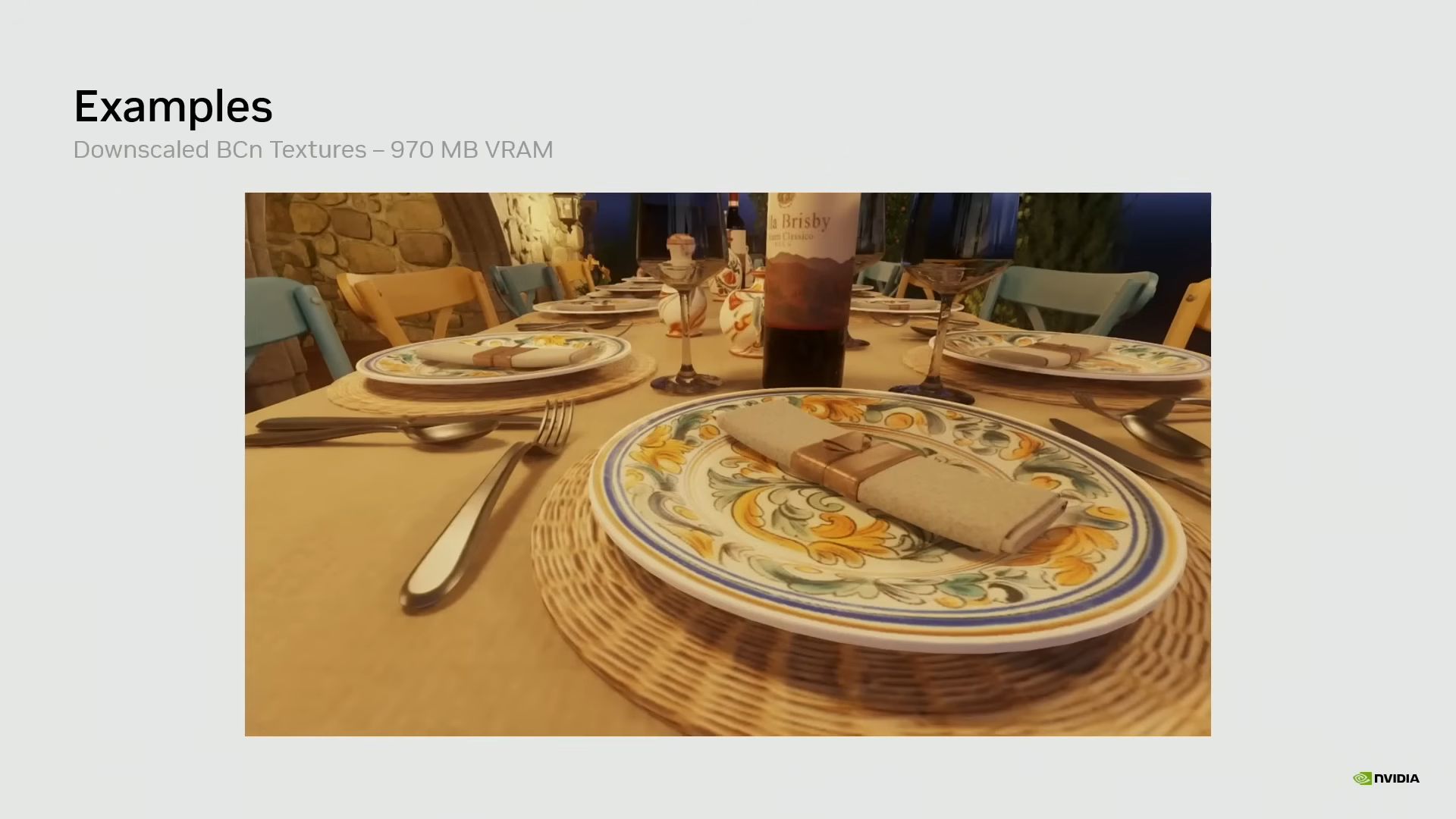

In the example below, Nvidia ran a Tuscan Villa scene that consumed 6.5 GB of VRAM with standard block compression. Switching to NTC reduced that to just 970 MB, and the image looks identical. Previously, another demo from the company showed a flight helmet with 272 MB of uncompressed textures. Block compression cut that down to 98 MB, but NTC reduced it to just 11.37 MB, roughly 24 times smaller than the original.
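To put those figures side by side, here is a quick back-of-the-envelope calculation of the reduction factors. The sizes are the ones quoted above; treating 1 GB as 1000 MB and the `ratio` helper are assumptions made for this sketch, not anything Nvidia published.

```python
def ratio(before_mb: float, after_mb: float) -> float:
    """Return how many times smaller after_mb is than before_mb."""
    return before_mb / after_mb


# Sizes quoted in the article. 1 GB = 1000 MB is an assumption here.
villa_bc_mb = 6.5 * 1000   # Tuscan Villa, block compression
villa_ntc_mb = 970         # Tuscan Villa, NTC
helmet_raw_mb = 272        # flight helmet, uncompressed
helmet_bc_mb = 98          # flight helmet, block compression
helmet_ntc_mb = 11.37      # flight helmet, NTC

villa_gain = ratio(villa_bc_mb, villa_ntc_mb)
helmet_bc_gain = ratio(helmet_raw_mb, helmet_bc_mb)
helmet_ntc_gain = ratio(helmet_raw_mb, helmet_ntc_mb)
helmet_ntc_vs_bc = ratio(helmet_bc_mb, helmet_ntc_mb)

print(f"Villa, NTC vs. block compression:  {villa_gain:.1f}x smaller")
print(f"Helmet, block compression vs. raw: {helmet_bc_gain:.1f}x smaller")
print(f"Helmet, NTC vs. raw:               {helmet_ntc_gain:.1f}x smaller")
print(f"Helmet, NTC vs. block compression: {helmet_ntc_vs_bc:.1f}x smaller")
```

The two demos are measured against different baselines: the villa figure compares NTC with block compression, while the helmet's headline number compares NTC with uncompressed textures.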

Introduction to Neural Rendering - YouTube

The company also demonstrated Neural Materials, following the same concept: letting a neural network evaluate and decompress material texture data instead of relying on computationally expensive BRDF math. Typically, multiple texture maps are stacked for a material, and the GPU must calculate how light interacts with each layer simultaneously in the rendering pipeline.

Neural Materials just asks the neural network how the light will react in that scenario and shades the pixel accordingly. The neural network is trained on all the texture data, so it already knows the result given the light and angle. As such, in the demo scene below, Nvidia achieved up to 7.7x faster render times at 1080p resolution with no loss in image quality.
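As a rough illustration of the idea, the sketch below runs a tiny multilayer perceptron that takes a per-texel latent vector plus light and view directions and returns an RGB color. Everything in it is hypothetical: the layer sizes, the 4-value latent, the `shade_pixel` name, and the random (untrained) weights are assumptions for this example and do not reflect Nvidia's actual network or API.

```python
import math
import random


def relu(v):
    return max(0.0, v)


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def dense(weights, biases, inputs, activation):
    """One fully connected layer: activation(W @ x + b)."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def make_layer(rng, n_out, n_in):
    """Random placeholder weights; a real network would be trained offline."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [rng.uniform(-1, 1) for _ in range(n_out)]
    return weights, biases


def shade_pixel(layers, latent, light_dir, view_dir):
    """Evaluate the network once per pixel instead of layered BRDF math."""
    x = list(latent) + list(light_dir) + list(view_dir)
    *hidden, last = layers
    for weights, biases in hidden:
        x = dense(weights, biases, x, relu)
    weights, biases = last
    return dense(weights, biases, x, sigmoid)  # RGB, each in [0, 1]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
LATENT_SIZE = 4         # hypothetical size of the per-texel latent vector
layers = [
    make_layer(rng, 16, LATENT_SIZE + 6),  # latent + light xyz + view xyz
    make_layer(rng, 16, 16),
    make_layer(rng, 3, 16),
]

rgb = shade_pixel(layers, [0.2, 0.7, 0.1, 0.9], (0.0, 0.0, 1.0), (0.0, 0.6, 0.8))
print(rgb)
```

Each layer boils down to a small matrix-vector multiply, which is the kind of workload a GPU's matrix hardware is built for.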

NTC is so efficient because it runs on matrix acceleration engines, a separate hardware block in modern GPUs, so base performance isn't affected. Nvidia calls them Tensor Cores, Intel calls them XMX engines, and AMD calls them AI accelerators. This is also where upscalers like DLSS, FSR, and XeSS live, reconstructing a low-res frame into a higher-resolution output, which makes NTC a natural part of Nvidia's neural rendering ambition.

The concept of neural rendering doesn't have the widest acclaim in the community yet, and the term "neural network" might lead you to think this is just more AI slop. It's actually the opposite, and one of the better uses of AI, since it's not generative at all. During game development, the NTC network is trained only on the specific set of textures it will later need to reproduce, so there's no chance of hallucination.

Textures consume by far the most VRAM in any game, so any technique to keep them in check is a welcome addition. That said, it's important to note that this isn't exclusive to Nvidia, as Microsoft has standardized the underlying capability as "Cooperative Vectors" in DirectX. Intel has previously shown off its own demo with noticeably better textures compared to block compression. AMD last talked about the tech in 2024, but it's likely on board as well.

Currently, no game supports Cooperative Vectors or Nvidia's Neural Texture Compression, but we should start to see it implemented soon, given the industry's trajectory. AI has become the answer to seemingly every age-old problem, and corporations are inventing new ways to incorporate it where it doesn't belong. Innovations like NTC, however, show that it can be implemented tastefully to make an actual, meaningful difference.
