FEATURE In an unassuming three-story office building in Cupertino, California, engineers from Amazon Web Services are busy trying to make networking inconspicuous.

They work in windowless hardware development labs at the center of the structure, surrounded by a ring of office cubicles that afford a view of scarce parking spaces and perimeter tree cover.

Their latest project, which The Register and several other publications agreed not to discuss in advance of the pending official announcement, may get some attention. But their networking ambition differs from the promotional goals of the AWS communications team.

A network should be like a light switch, said Matt Rehder, VP of core networking at AWS, during a tour of AWS's Torre Avenue lab in late April. It should be something that just works.

"No one really cares about the network at the end of the day," he said. "It serves a function. You care about it when it's broken. But otherwise you want it to be out of your way. So that's been our mental model for the last 15 years – how do we get the network out of the way?"

Networking has been broken for AWS since 2010, at least from a business perspective. James Hamilton, SVP and distinguished engineer at Amazon, said as much in a presentation titled "Datacenter Networks are in my Way."

"This was in the very early days of the cloud," Rehder explained. "But even at that time, with the growth of bandwidth we were seeing, it was very clear that the way networks had been built wasn't going to scale into the future and that something fundamentally different had to happen."

Hamilton objected to the vertically integrated networking stack that slowed innovation and kept margins high for network equipment makers. He likened it to the mainframe business model, and said he preferred the server business model, where there's competition and open source software.

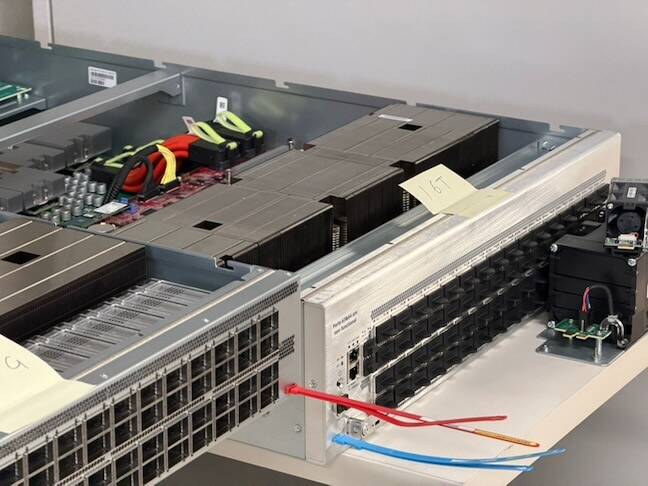

Networks for AWS, Rehder explained, consist of three primary types of hardware: network devices, including switches and routers, built on application-specific integrated circuits (ASICs) that forward data from one port to another; optical transceivers, which send and receive light signals via laser; and cabling, which may be fiber-optic glass or copper wire.

When AWS was being built out a decade and a half ago, the cloud biz decided it needed to take control of its network technology. "It's so foundational to what we built," said Rehder. "And so we decided we needed to start developing our own hardware and developing our own software."

The company started small, working with third parties to develop network devices, iterating on that until the footprint of its homegrown technology covered its datacenters, its core network, and its border network.

What's unique about AWS, Rehder said, is that other network providers typically use one type of switching ASIC for their aggregation network, another for their core network, and another for their border network, because each has different needs in terms of memory, performance, and throughput.

"They would all use different silicon for different switches," he said. "We use one for everything."

The reason, he explained, is simplicity. "If you have one thing and you overly invest in making it really good, you're putting all of your energy into that hardware and software making it super-reliable," Rehder said. "It also helps us scale the network because when we're managing our supply chain or figuring out how to scale, we're not trying to balance all these competing SKUs."

That does create some challenges, he admitted.

"There's a good reason people use different types of switching ASICs because there is different functionality," Rehder explained. "And that's where controlling our own software really comes to play. We've effectively been able to remove the need for that custom silicon by being smart with our software and just finding creative ways to keep what needs to happen on the device in the hardware as simple as possible while still delivering great performance and functionality for our customers."

The switches have specialized ASICs to maximize the efficiency of packet routing. The ASIC can move many millions of packets per second from one port to another, without going through a CPU.

So now AWS has its own hardware, running on its own software, a version of Linux called NetOS. The company's current homegrown switch is capable of transmitting 51.2 terabits per second of traffic, via 64 ports operating at 800 gigabits per second. Within the next 12 months, its next generation switch will provide 102.4 terabits per second via 64 ports running at 1.6 terabits per second.
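The throughput figures follow directly from port count times per-port speed. A minimal sketch of that arithmetic (the function name is illustrative, not anything from AWS):

```python
def aggregate_tbps(ports: int, gbps_per_port: float) -> float:
    """Total switch throughput in terabits per second."""
    return ports * gbps_per_port / 1000  # 1 Tbps = 1000 Gbps

# Current switch: 64 ports at 800 Gbps each.
current = aggregate_tbps(64, 800)
# Next generation: 64 ports at 1.6 Tbps (1600 Gbps) each.
next_gen = aggregate_tbps(64, 1600)

print(f"current: {current} Tbps, next generation: {next_gen} Tbps")
```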

"Everything runs the same operating system as well, which is super powerful for us," said Rehder. "From a security perspective, it means the code's all ours. We can scan it, we can fix bugs … we can patch and update our devices very, very regularly."

Owning everything has allowed AWS to do some difficult things. As an example, Rehder pointed to the high precision time network that AWS released a few years ago. That required unique hardware and unique software that integrates with the company's Nitro server chip. The technology, he said, allows applications such as high-frequency trading and distributed databases to operate across long distances.

"The only way we could have achieved that is because we were able to bring our own hardware and our own software and then do something that was unique to solve some AWS customer problem," he said.

"The bigger problem we're trying to solve is how do we keep all the server clocks in sync," said Satish Vangala, director of network product development at AWS. "We had to build a dedicated network to ensure that we have the timing synchronization at microsecond accuracy for all the servers in our datacenter."

AWS's network consists of about two million devices and about 50-60 million optical links and transceivers. It includes about 20 million kilometers of terrestrial and subsea fiber at the moment, which Rehder says is enough to reach from the Earth to the Moon and back 25 times. And that's just cable between buildings. If you measure the cable within its datacenters, the amount of cable is maybe an order of magnitude higher.
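The Moon comparison can be sanity-checked with a few lines. This sketch assumes the commonly cited average Earth-Moon distance of about 384,400 km, which is not a figure from the article:

```python
FIBER_KM = 20_000_000        # fiber length quoted above
EARTH_MOON_KM = 384_400      # assumed average Earth-Moon distance

# One round trip is there and back again.
round_trips = FIBER_KM / (2 * EARTH_MOON_KM)

print(f"about {round_trips:.0f} round trips")
```

Under that assumption the result is roughly 26 round trips, close to the 25 quoted.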

One of the ways AWS has been improving its network recently has been through the deployment of hollow core fiber, which has been around for a while but only recently became something that could be manufactured at scale.

With normal fiber optic cable, light-based networking signals travel through solid glass, which slows them down. Hollow core fiber consists of a glass tube surrounding air or vacuum. Light moves faster through air than through glass, so the signal travels at a speed closer to its limit in a vacuum.

The result is a 30 percent reduction in latency, which Rehder says is significant, particularly for datacenter placement. He explained that when an AWS region is built and has, for example, three availability zones, the datacenters have to be near each other but not too close.

For subsequent expansion, latency between structures constrains building placement – it has to be low enough that customer applications in different datacenters within the same region behave as if they were located in the same place.

So hollow core fiber expands the potential resources available to AWS datacenters – in terms of land and power – by allowing structures to be placed within a larger radius.
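As a rough check on the latency figure, the sketch below compares propagation delay in solid glass and in a hollow core. The refractive indices (about 1.47 for silica glass, about 1.0003 for air) are textbook approximations assumed here, not numbers from AWS, and the 100 km span is an arbitrary example.

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum
N_GLASS = 1.47            # assumed refractive index of silica fiber
N_AIR = 1.0003            # assumed refractive index of air

def one_way_latency_ms(distance_km: float, refractive_index: float) -> float:
    """Propagation delay in milliseconds; light travels at c / n in the medium."""
    return distance_km * refractive_index / C_KM_PER_S * 1000

glass_ms = one_way_latency_ms(100, N_GLASS)
hollow_ms = one_way_latency_ms(100, N_AIR)

# Fractional latency saved by switching from glass to hollow core.
reduction = 1 - hollow_ms / glass_ms
# For a fixed latency budget, how much longer the fiber run can be.
reach_ratio = N_GLASS / N_AIR

print(f"glass: {glass_ms:.3f} ms, hollow core: {hollow_ms:.3f} ms")
print(f"latency reduction: {reduction:.1%}, reach multiplier: {reach_ratio:.2f}x")
```

Under these assumptions the reduction comes out a little over 30 percent, in line with the figure quoted, and the same latency budget covers a fiber run roughly 1.47 times as long.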

"We do have hollow core deployed in a few places now," said Rehder. "It's more expensive than the traditional fiber. But if it enables us to improve latency or better serve customers, in the grand scheme of things, the cost of the fiber is small when you look at the entire cost of datacenters, servers and network devices and everything else."

Network improvements like hollow core fiber are necessary because the demand for bandwidth keeps growing.

Rehder said that the need for bandwidth has been growing throughout his career but more so in the last four or five years as generative AI services have taken off.

"The accelerated server types tend to have three to four times the bandwidth needs of the more traditional CPU-based server types," he explained. "We're still using the same hardware and we're using the same software, but we're packaging it together in a different way."

To get more servers with more bandwidth under one network in a datacenter with less latency between servers, AWS uses fewer networking devices in the path between two servers.

"So the UltraCluster network lets you scale out to be much larger than the other traditional network that we use," Rehder said. "Effectively a different network topology. Instead of having seven network devices in the path between any two points, it has five network devices in the path between the two points."

As AWS has built more capacity and expanded its network, the scale of its operations has demanded innovation. Rehder explained that issues like physical cabling infrastructure – the number of connectors required and how they can be optimized for ease, speed of deployment, and reliability – come into play.

"When you're into fiber optic, it's not like the Ethernet port you plug in," Rehder explained. "With fiber optic cabling, because you're sending a signal, even though you may plug the cable in right, if it's not seated perfectly or if it's dirty at all, that can obscure the signal and that can reduce the reliability of it. It's a major challenge when you're operating at high scale and the latest technology is on the edge of what you can cleanly send and receive and everyone's trying to deploy that."

"That's a big area of focus for us – not only building all this capacity but making sure it can be built quickly and then, once it's built, it runs extremely reliably," he said.

One of the ways AWS tries to ensure a smooth setup is with a device called a firefly, a connector that looks a bit like an alien from the arcade classic Space Invaders. Its function is to verify a fiber signal path so that's not a variable when a new endpoint gets added.

"Each of these will have a send and a receive," said Rehder, "and this basically takes the send and receive and loops it, so that when we get the fiber into the datacenter, it'll be connected to a network switch at the other side and it can send a signal and if you see the signal come back to itself, you can make sure the fiber path is clean. So when the client comes in you can just plug it in and it's good to go."
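The loopback idea Rehder describes can be illustrated with a toy model: send a known pattern, and treat the path as clean only if exactly the same pattern comes back. Everything in this sketch is hypothetical, including the function names and the way a dirty connector is simulated. It is not AWS's tooling, and real optical validation measures signal quality rather than comparing bytes.

```python
import os
from typing import Callable

# Hypothetical link model: takes the bytes sent, returns the bytes received back.
Link = Callable[[bytes], bytes]

def clean_loopback(frame: bytes) -> bytes:
    """Ideal loopback plug: the receive side sees exactly what was sent."""
    return frame

def dirty_loopback(frame: bytes) -> bytes:
    """Toy model of a dirty or badly seated connector: the first byte is corrupted."""
    if not frame:
        return frame
    return bytes([frame[0] ^ 0xFF]) + frame[1:]

def path_is_clean(link: Link, trials: int = 100, size: int = 64) -> bool:
    """Send random test patterns and require every one to return unchanged."""
    for _ in range(trials):
        pattern = os.urandom(size)
        if link(pattern) != pattern:
            return False
    return True

print(path_is_clean(clean_loopback))  # True
print(path_is_clean(dirty_loopback))  # False
```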

When the network works – more than 99 percent of the time, usually – you may not even notice the engineering. ®
