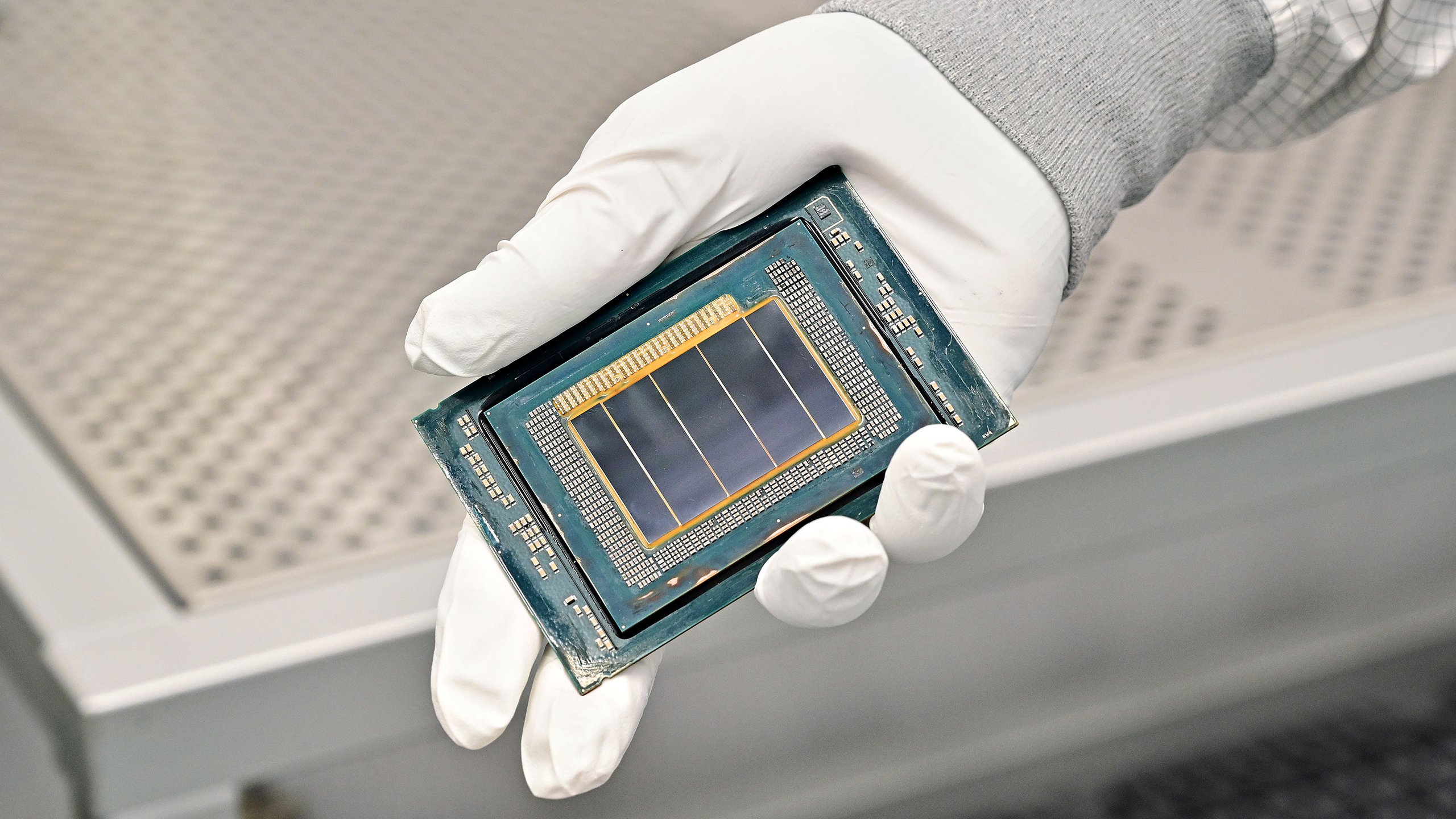

Intel said during its Q1 2026 earnings call that the ratio of CPUs to GPUs deployed in data centers could tighten to as much as 1:1 in agentic scenarios as AI workloads shift from training to inference. Today, an AI server typically needs one CPU for every four to eight GPUs, but agentic AI could shift that dramatically, to one CPU per GPU. That shift in demand has driven server CPU prices up by as much as 20% since March, and Intel confirmed back in October that it’s prioritizing data center chip production over consumer CPUs to meet demand it can’t currently fulfill.

One of the standout details from the call was CFO David Zinsner’s description of how server deployments are already changing: the ratio of CPUs to GPUs in data centers has moved from 1:8 to 1:4. He added that as workloads continue migrating toward inference and agentic AI, that ratio could converge to 1:1 or even tilt further in favor of CPUs. “As you think about the growth rate now going forward, it’s [CPU demand] going to become a significant part of the AI [total addressable market],” Zinsner said.

At 1:8, a rack filled with eight GPUs needs a single server CPU to handle orchestration and data handling. At 1:4, that same rack needs twice as many CPUs, and at 1:1, eight times as many. Intel is already supply-constrained on Xeon, with Zinsner stating during the call that the unmet demand “starts with a B. So it’s meaningful.” Server CPU lead times are currently around six months, and both AMD and Intel acknowledged the demand spike at last month’s Morgan Stanley conference, where Zinsner called the CPU “cool again.”
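The rack arithmetic above is easy to check. Below is a minimal Python sketch, assuming a hypothetical helper named `cpus_needed` and the same eight-GPU rack used in the example. It rounds up on the simplifying assumption that CPUs are bought as whole sockets; that rounding rule is illustrative, not something Intel described.

```python
import math


def cpus_needed(num_gpus: int, cpu_to_gpu: tuple[int, int]) -> int:
    """Hypothetical helper: whole CPUs needed for num_gpus at a CPU:GPU ratio.

    cpu_to_gpu is (cpus, gpus), e.g. (1, 8) means one CPU per eight GPUs.
    Rounds up, assuming a fractional CPU still means a whole socket.
    """
    cpus, gpus = cpu_to_gpu
    return math.ceil(num_gpus * cpus / gpus)


# Ratios cited on the call, applied to a hypothetical eight-GPU rack.
ratios = {"1:8": (1, 8), "1:4": (1, 4), "1:1": (1, 1)}
baseline = cpus_needed(8, ratios["1:8"])
for label, ratio in ratios.items():
    n = cpus_needed(8, ratio)
    print(f"{label}: {n} CPU(s), {n // baseline}x the 1:8 baseline")
```

Running it prints one CPU at 1:8, two at 1:4, and eight at 1:1, matching the twofold and eightfold increases described above.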
