Serving tech enthusiasts for over 25 years.

TechSpot means tech analysis and advice you can trust.

Forward-looking: Researchers from the University of California, Berkeley, and the University of California, San Francisco, have developed a brain-computer interface system capable of restoring naturalistic speech to individuals with severe paralysis. The innovation, which addresses a long-standing challenge in speech neuroprostheses, was detailed in a study published in Nature Neuroscience and represents a significant leap forward in enabling real-time communication for those who have lost the ability to speak.

The research team overcame the issue of latency – the delay between a person's intent to speak and the production of sound – by leveraging advances in artificial intelligence. Their streaming system decodes neural signals into audible speech in near-real time.

"Our streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses," explained Gopala Anumanchipalli, co-principal investigator and assistant professor at UC Berkeley. "Using a similar type of algorithm, we found that we could decode neural data and, for the first time, enable near-synchronous voice streaming. The result is more naturalistic, fluent speech synthesis."

The technology holds immense promise for improving the lives of individuals with conditions such as ALS or stroke-induced paralysis. "It is exciting that the latest AI advances are greatly accelerating BCIs for practical real-world use in the near future," said Edward Chang, a neurosurgeon at UCSF and senior co-principal investigator of the study.

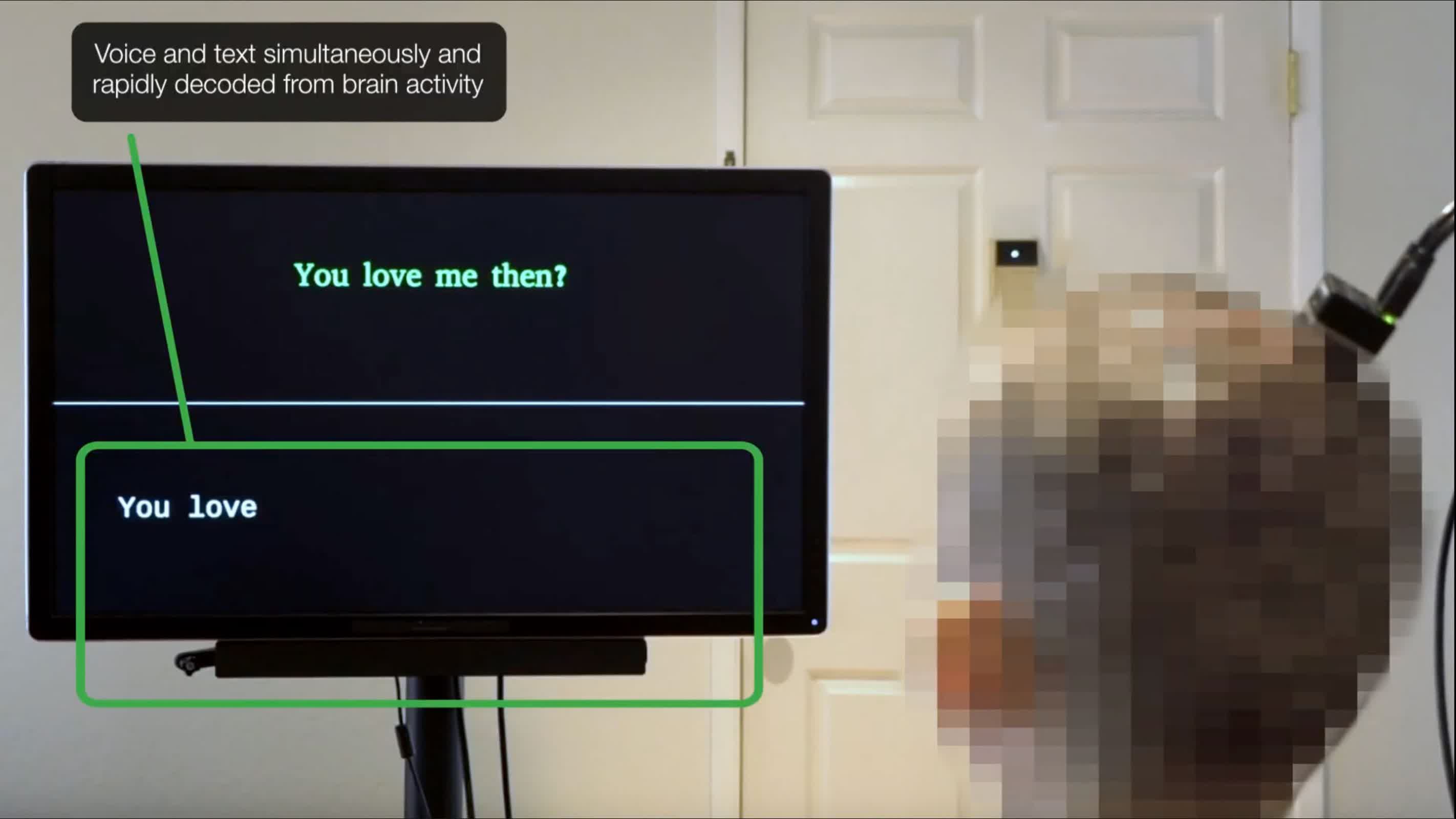

The system works by sampling neural data from the motor cortex – the part of the brain responsible for controlling speech production – and using AI to decode this activity into spoken words. The researchers tested their method on Ann, a 47-year-old woman who has been unable to speak since a stroke 18 years ago. Ann participated in a clinical trial where electrodes implanted on her brain surface recorded neural activity as she silently attempted to speak sentences displayed on a screen. These signals were then decoded into audible speech using an AI model trained with her pre-injury voice.

"We are essentially intercepting signals where the thought is translated into articulation," explained Cheol Jun Cho, a UC Berkeley Ph.D. student and co-lead author of the study. "So what we're decoding is after a thought has happened – after we've decided what to say and how to move our vocal-tract muscles." This approach allowed researchers to map Ann's neural activity to target sentences without requiring her to vocalize.

One of the key breakthroughs was achieving near real-time speech synthesis. Previous BCI systems had significant delays – up to eight seconds for decoding a single sentence – but this new method reduced latency dramatically. "We can see relative to that intent signal, within one second, we are getting the first sound out," noted Anumanchipalli.

The system also demonstrated continuous decoding capabilities, allowing Ann to "speak" without interruptions.

Despite its speed, the system maintained high accuracy in decoding speech. To test its adaptability, researchers evaluated whether it could synthesize words outside its training dataset.

Using rare words from the NATO phonetic alphabet like "Alpha" and "Bravo," they confirmed that their model could generalize beyond familiar vocabulary. "We found that our model does this well, which shows that it is indeed learning the building blocks of sound or voice," said Anumanchipalli.

Ann herself noted a profound difference between this new streaming approach and earlier text-to-speech methods used in prior studies. According to Anumanchipalli, she described hearing her own voice in near-real time as increasing her sense of embodiment – a critical step toward making BCIs feel more natural.

The researchers also explored how their system could work with different brain-sensing technologies, including microelectrode arrays (MEAs) that penetrate brain tissue, and non-invasive surface electromyography (sEMG) sensors that detect muscle activity on the face. This versatility suggests broader potential applications across various BCI platforms.

The team is now focused on further enhancing and optimizing their technology. One area of ongoing research involves enhancing expressivity by incorporating paralinguistic features such as tone, pitch, and loudness into synthesized speech. "This is a longstanding problem even in classical audio synthesis fields," said Kaylo Littlejohn, another co-lead author and Ph.D. student at UC Berkeley. "It would bridge the gap to full and complete naturalism."

While still experimental, this breakthrough offers hope that BCIs capable of restoring fluent speech could become widely available within the next decade with sustained investment and development.

The project received funding from organizations including the National Institute on Deafness and Other Communication Disorders (NIDCD), Japan Science and Technology Agency's Moonshot Program, and several private foundations.

"This proof-of-concept framework is quite a breakthrough," said Cho. "We are optimistic that we can now make advances at every level."

English (US) ·

English (US) ·