Running LLMs locally on a GPU requires a lot of VRAM, which can drive the cost of a rig up sharply these days. Amid the ongoing AI boom, the best value lies in older, often forgotten silicon that's still capable, which is exactly what YouTuber Hardware Haven found. He took an Nvidia V100 server GPU with an SXM interface, which mounts more like a socketed processor than an add-in card, and adapted it to a standard PCIe slot on a consumer motherboard. It ended up performing quite well for its age (and cost), even against modern SKUs.

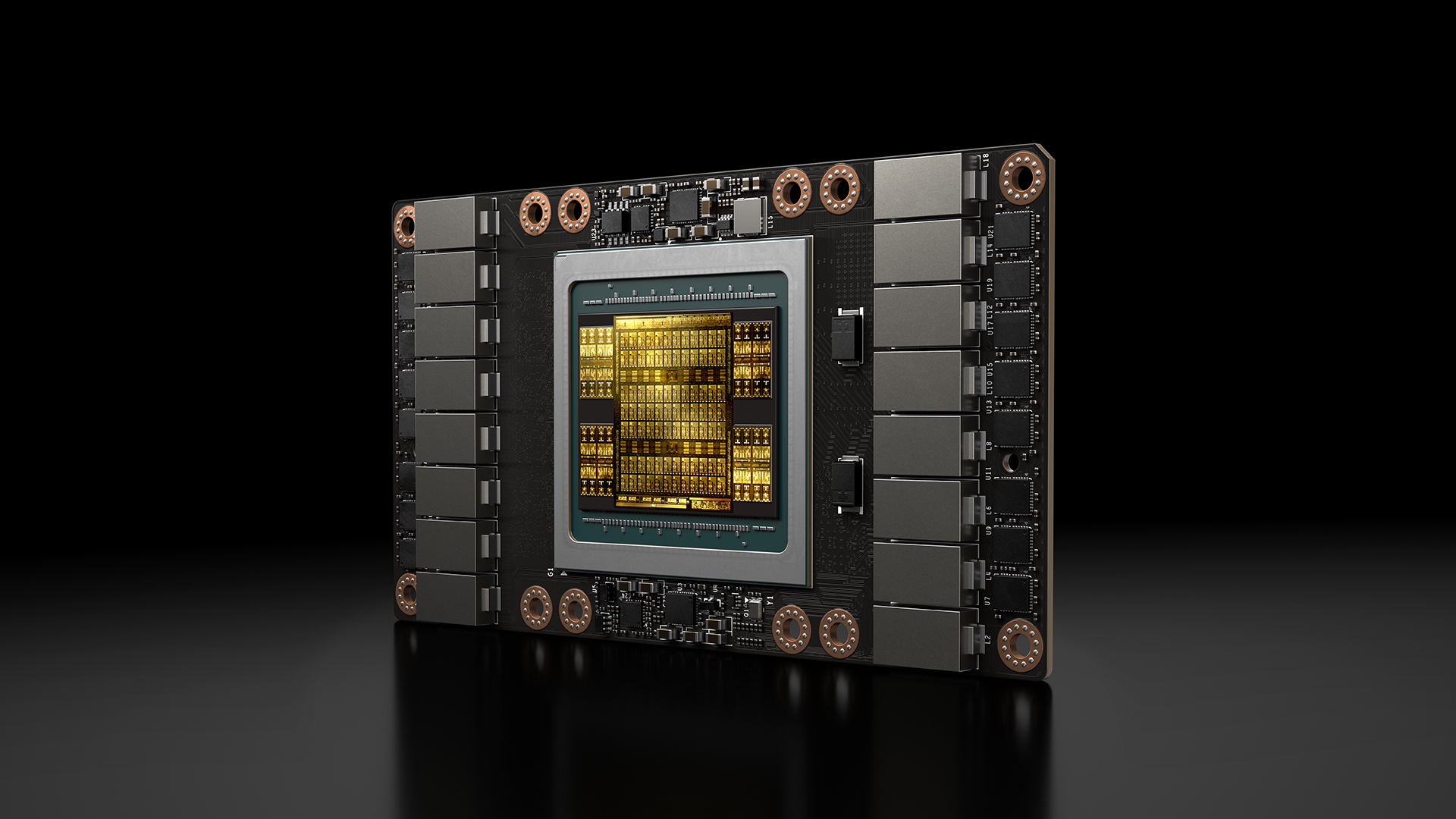

The contraption begins with an Nvidia Tesla V100 AI GPU that uses the SXM2 socket and is designed for rack-scale deployments. The SXM interface is a mezzanine-based connector that mounts GPUs flat against a specialized baseboard, similar to a CPU socket, and the GPU is then screwed down to the baseboard. The host was able to acquire this GPU for just $100, and the accompanying SXM2-to-PCIe x16 adapter was also around $100, bringing the total cost of the setup to about $200. The V100 comes with either 16GB or 32GB of HBM2 (we're working with 16GB here, sporting 900 GB/s of bandwidth), and it's based on the Volta architecture.

This Ridiculous $200 AI GPU Shouldn’t Be This Good - YouTube

Both cards have 16 GB of VRAM, and the RX 7800 XT is the newer part, with supposedly more efficient silicon, but then again, Nvidia is the gold standard for software support in these benchmarks. So, the host switched to an RTX 3060 12 GB (the best Nvidia GPU he had on hand), which is built on the newer Ampere architecture, to compare against the V100.

Running Google's gemma4: e4b, the V100 topped out at 108 tokens per second, while the 3060 12 GB managed only about 76 tokens per second, though it did so while consuming less power: 235W on the RTX 3060 versus 293W on the V100. In tokens per second per watt, that comes out to around 0.37 for the V100, slightly more efficient than the 0.33 on the 3060.

Power-limiting the V100 to 100W (it ships with a 300W limit out of the box) dropped the power draw to 170W in the same test, while still producing 95 tok/s. To make the comparison fair, the YouTuber also limited the 3060 to 100W; it ended up consuming 171W and producing just 68 tokens per second. So, with both new results, the V100 achieves an efficiency score of 0.55 tokens/s per watt, while the 3060 12 GB was stuck at 0.39 tokens/s per watt.
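The efficiency figures above are simply throughput divided by power draw. As a rough sketch, the arithmetic can be reproduced from the numbers quoted in this article; the names `RESULTS`, `tokens_per_second_per_watt`, and `efficiency_table` are illustrative, not anything from the video. Because the quoted throughput and wattage are themselves rounded ("about 76 tokens per second"), the recomputed values may differ from the quoted scores in the last digit.

```python
# Figures quoted in the article: (tokens per second, watts drawn during the test).
RESULTS = {
    "V100, stock": (108, 293),
    "RTX 3060 12 GB, stock": (76, 235),
    "V100, 100W limit": (95, 170),
    "RTX 3060 12 GB, 100W limit": (68, 171),
}


def tokens_per_second_per_watt(tokens_per_second, watts):
    """Throughput divided by power draw (equivalent to tokens per joule)."""
    return tokens_per_second / watts


def efficiency_table(results):
    """Map each configuration name to its tokens/s-per-watt score."""
    return {
        name: tokens_per_second_per_watt(tps, watts)
        for name, (tps, watts) in results.items()
    }


if __name__ == "__main__":
    for name, score in efficiency_table(RESULTS).items():
        print(f"{name:<28} {score:.3f} tok/s per W")
```

Since a watt is one joule per second, tokens per second per watt is the same quantity as tokens per joule, which makes the score a direct measure of how much text each card generates for a given amount of energy.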

Even though the V100 proved much more efficient under load, despite being several generations old, its idle power draw is the real catch. It draws 45W while sitting idle, compared to 35W on the RTX 3060. Finally, the YouTuber also tested Frigate NVR, which ended up performing really well on the V100, better than on the RTX 3060, but consumed more power, as you'd expect.

The host's previous setup for Frigate was an Intel N100-based mini PC that struggled to detect his dog using mobilenetv2, whereas the V100 identified it instantly. Monitoring just two cameras made the V100 pull over 100W, though; the RTX 3060 was similar in this regard, while the previous N100 setup consumed only 26W when handling six different cameras. That marks the end of the benchmarking.

This V100 experiment turned out to be a success overall, but the virality of the original video and the fact that we're writing this article mean these bad boys are about to go up in price. So, if you're interested, make sure to grab one before it's too late; the YouTuber found it for just $100 on eBay, and the PCIe adapters for early SXM sockets are cheap enough as well. The 32GB variant of the V100 goes for $500, however.

Follow Tom's Hardware on Google News, or add us as a preferred source, to get our latest news, analysis, & reviews in your feeds.
